ExtremeXP Framework Components

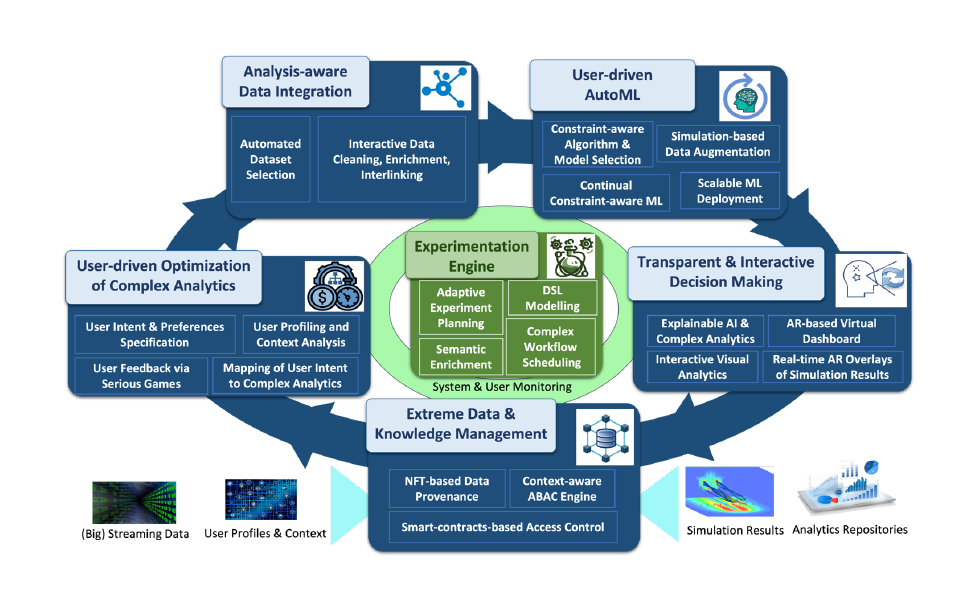

The ExtremeXP framework is built as a modular and interoperable ecosystem designed to support the full lifecycle of continuous adaptive experimentation, integrating human-in-the-loop processes and advanced data-driven decision making.

Framework Architecture Overview

ExtremeXP delivers a comprehensive and modular framework for next-generation continuous adaptive experimentation by combining experiment design, execution, data management, monitoring, and explainability. Below are the main components of the ExtremeXP framework.

Core Components

The ExtremeXP Portal serves as the primary entry point for all ExtremeXP users. Registered users can design and specify workflows and experiments using both:

A Graphical Editor (Experiments tab)

A Textual Editor (Tasks tab, also referred to as the DSL Editor)

The portal centralizes experiment design and provides seamless access to both visual and textual specification tools.

The Experiment DSL Language Server provides syntax and grammar validation for the ExtremeXP DSL directly within the user’s preferred development environment.

Architecture

Server side

Implemented using Xtext

Runs on the Java Virtual Machine (JVM)

Client side

Editor integrations for Visual Studio Code and IntelliJ IDEA

By flagging syntax and grammar errors directly in the editor, this component helps catch specification errors before execution and keeps experiment definitions consistent.
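The server and the editor clients run as separate processes and need a shared wire format. Assuming the server speaks the standard Language Server Protocol (the usual setup for Xtext-based language servers with VS Code and IntelliJ clients), a client announces an opened file with a `textDocument/didOpen` notification framed by a `Content-Length` header. The sketch below builds and parses such a message; the language identifier `xxp` and the file URI are hypothetical placeholders, not values taken from ExtremeXP.

```python
import json


def frame_lsp_message(payload: dict) -> bytes:
    """Encode a JSON-RPC payload using the LSP base-protocol framing."""
    body = json.dumps(payload).encode("utf-8")
    # Content-Length counts bytes of the UTF-8 body, not characters.
    header = f"Content-Length: {len(body)}\r\n\r\n".encode("ascii")
    return header + body


def parse_lsp_message(data: bytes) -> dict:
    """Read the Content-Length header and decode exactly that many body bytes."""
    header, _, rest = data.partition(b"\r\n\r\n")
    length = None
    for line in header.decode("ascii").split("\r\n"):
        name, _, value = line.partition(":")
        if name.strip().lower() == "content-length":
            length = int(value.strip())
    if length is None:
        raise ValueError("missing Content-Length header")
    return json.loads(rest[:length].decode("utf-8"))


def did_open(uri: str, text: str, language_id: str = "xxp") -> bytes:
    """Build a textDocument/didOpen notification (notifications carry no "id")."""
    return frame_lsp_message({
        "jsonrpc": "2.0",
        "method": "textDocument/didOpen",
        "params": {
            "textDocument": {
                "uri": uri,                 # hypothetical file location
                "languageId": language_id,  # hypothetical id for the ExtremeXP DSL
                "version": 1,
                "text": text,
            }
        },
    })


if __name__ == "__main__":
    message = did_open("file:///tmp/experiment.xxp", "placeholder DSL source")
    print(message.decode("utf-8"))
```

In the protocol, the server answers with `textDocument/publishDiagnostics` notifications that carry any syntax or grammar errors, which the editor then renders as inline markers.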

The ExtremeXP Experimentation Engine is the core component responsible for implementing continuous adaptive experiment planning. It enables researchers and data scientists to:

Define experiments using a simple Domain-Specific Language (DSL)

Execute workflows across multiple backends:

ProActive Scheduler

Kubeflow Pipelines

Local execution

Manage datasets via:

Local storage

Decentralized Data Management (DDM)

Track experiment metadata and results through the Data Abstraction Layer (DAL)

Support human-in-the-loop interaction workflows
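Running the same workflow on ProActive, Kubeflow Pipelines, or locally suggests a pluggable backend design. The sketch below illustrates that pattern under stated assumptions: the class names `ExecutionBackend`, `LocalBackend`, and `RemoteBackendStub`, the `run` method, and the workflow-as-list-of-callables shape are hypothetical and are not the engine's actual API. Only the local backend really executes; the remote stub just records what it would submit.

```python
from abc import ABC, abstractmethod
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical workflow shape: ordered (name, task) pairs, where each task
# takes the current context dictionary and returns an updated one.
Context = Dict[str, Any]
Workflow = List[Tuple[str, Callable[[Context], Context]]]


class ExecutionBackend(ABC):
    """Hypothetical interface that every execution backend implements."""

    @abstractmethod
    def run(self, workflow: Workflow, params: Context) -> Context:
        ...


class LocalBackend(ExecutionBackend):
    """Executes each task in-process, in declaration order."""

    def run(self, workflow: Workflow, params: Context) -> Context:
        context, executed = dict(params), []
        for name, task in workflow:
            context = task(context)
            executed.append(name)
        return {"status": "completed", "outputs": context, "executed": executed}


class RemoteBackendStub(ExecutionBackend):
    """Placeholder for ProActive or Kubeflow Pipelines: it only records the
    job it would submit and calls no real scheduler API."""

    def __init__(self, target: str):
        self.target = target
        self.submitted: List[Context] = []

    def run(self, workflow: Workflow, params: Context) -> Context:
        job = {"target": self.target,
               "tasks": [name for name, _ in workflow],
               "params": dict(params)}
        self.submitted.append(job)
        return {"status": "submitted", "job": job}


def get_backend(name: str) -> ExecutionBackend:
    if name == "local":
        return LocalBackend()
    if name in ("proactive", "kubeflow"):
        return RemoteBackendStub(name)
    raise ValueError(f"unknown backend: {name}")


# Toy two-step workflow used in the example below.
def load(ctx: Context) -> Context:
    return {**ctx, "data": [1, 2, 3, 4]}


def train(ctx: Context) -> Context:
    return {**ctx, "score": sum(ctx["data"]) / len(ctx["data"]) * ctx["scale"]}


if __name__ == "__main__":
    workflow = [("load", load), ("train", train)]
    for backend in ("local", "kubeflow"):
        print(backend, get_backend(backend).run(workflow, {"scale": 2}))
```

Keeping the workflow definition independent of the backend is what lets one specification move from local debugging to a distributed scheduler without being rewritten.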

The Decentralized Data Management (DDM) component provides a distributed data management system tailored for ExtremeXP, ensuring robust data transport, validation, and insight generation.

Key technologies

Zenoh nodes for decentralized data transport and querying

React frontend

Flask backend

Celery task management

PostgreSQL storage

Ollama integration for dataset-driven insights

Great Expectations for dataset validation

YData Profiling for automated data profiling and reporting
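Great Expectations expresses data quality as declarative "expectations" that a dataset either meets or fails. The dependency-free sketch below mimics that idea so the concept is visible without installing the library. It does not use the Great Expectations API; the function names, the row format, and the example columns are hypothetical.

```python
from typing import Any, Callable, Dict, List

Row = Dict[str, Any]
Expectation = Callable[[List[Row]], Dict[str, Any]]


def expect_not_null(column: str) -> Expectation:
    """Every row must have a non-null value in `column`."""
    def check(rows: List[Row]) -> Dict[str, Any]:
        failed = [i for i, row in enumerate(rows) if row.get(column) is None]
        return {"expectation": f"{column} is not null",
                "success": not failed, "failed_rows": failed}
    return check


def expect_between(column: str, low: float, high: float) -> Expectation:
    """Non-null values in `column` must lie in [low, high]; nulls are skipped."""
    def check(rows: List[Row]) -> Dict[str, Any]:
        failed = [i for i, row in enumerate(rows)
                  if row.get(column) is not None and not low <= row[column] <= high]
        return {"expectation": f"{low} <= {column} <= {high}",
                "success": not failed, "failed_rows": failed}
    return check


def validate(rows: List[Row], expectations: List[Expectation]) -> Dict[str, Any]:
    """Run every expectation; the dataset passes only if all of them pass."""
    results = [expect(rows) for expect in expectations]
    return {"success": all(r["success"] for r in results), "results": results}


# Hypothetical sensor readings: row 1 has a missing value, row 2 is out of range.
SAMPLE_ROWS = [
    {"sensor": "a", "temp_c": 21.5},
    {"sensor": "b", "temp_c": None},
    {"sensor": "c", "temp_c": 140.0},
]

if __name__ == "__main__":
    report = validate(SAMPLE_ROWS, [expect_not_null("temp_c"),
                                    expect_between("temp_c", -40, 85)])
    print(report["success"])
    for result in report["results"]:
        print(result)
```

Each expectation reports the offending row indices as well as a pass/fail flag, which is the kind of detail a validation report needs to be actionable.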

The operation of the ExtremeXP framework requires access to an instance of the ProActive Scheduler, which enables distributed execution of experimental workflows.

Licensing

A separate license from Activeeon is required

An official request must be sent explaining:

Why the license is needed

Who will use it

For what purpose

Technical requirements

Minimum hardware: 4 CPU cores, 8 GB RAM

Minimum RAM used by the scheduler itself: 4 GB

Public IP required

Open TCP ports: 8880 and 33647
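Before requesting or installing a scheduler instance, it can help to check a candidate host against the requirements listed above. The sketch below is a hypothetical preflight helper, not an Activeeon tool: it compares CPU and memory figures with the stated minimums and tests whether TCP ports 8880 and 33647 accept connections. The host you probe is your own choice, and memory detection relies on `os.sysconf`, which is available only on POSIX systems.

```python
import os
import socket
from typing import List

# Minimums taken from the ProActive requirements listed in this section.
MIN_CPU_CORES = 4
MIN_RAM_GB = 8
REQUIRED_PORTS = (8880, 33647)


def check_requirements(cpu_cores: int, ram_gb: float) -> List[str]:
    """Return a list of unmet requirements (empty when the host qualifies)."""
    problems = []
    if cpu_cores < MIN_CPU_CORES:
        problems.append(f"need at least {MIN_CPU_CORES} CPU cores, found {cpu_cores}")
    if ram_gb < MIN_RAM_GB:
        problems.append(f"need at least {MIN_RAM_GB} GB RAM, found {ram_gb:.1f}")
    return problems


def local_ram_gb() -> float:
    """Physical memory of this machine in GiB (os.sysconf is POSIX-only)."""
    return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES") / 1024 ** 3


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    print(check_requirements(os.cpu_count() or 0, local_ram_gb()))
    # Replace "localhost" with the public address of the scheduler host.
    for port in REQUIRED_PORTS:
        print(port, "open" if port_is_open("localhost", port) else "closed")
```

Machines sold as "8 GB" often report slightly less usable memory, so the strict comparison may flag them. The public IP requirement is not checked by this sketch.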

ExperimentLens is a lightweight yet powerful visual dashboard for interactive exploration, monitoring, and explainability of complex AI pipelines.

Developed within the ExtremeXP project, ExperimentLens enables researchers, data scientists, and engineers to:

Monitor pipeline lifecycles

Explore results across multiple experimental runs

Inspect configurations and outputs

Gain insights into pipeline behavior and sensitivity

The tool is built around human-in-the-loop experimentation.
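One simple way to look at sensitivity across many runs is to ask how much a metric moves when a single parameter changes value. The sketch below computes, for each parameter, the spread (maximum minus minimum) of the mean metric grouped by that parameter's values. This is an illustrative heuristic of our own choosing, not the method ExperimentLens implements, and the run format is hypothetical.

```python
from collections import defaultdict
from statistics import mean
from typing import Any, Dict, List

Run = Dict[str, Dict[str, Any]]  # {"config": {...}, "metrics": {...}}


def parameter_sensitivity(runs: List[Run], metric: str) -> Dict[str, float]:
    """Spread of the mean metric across the values each parameter takes.

    A large spread means the metric changes a lot with that parameter;
    parameters are returned from most to least influential.
    """
    params = {p for run in runs for p in run["config"]}
    spread = {}
    for param in sorted(params):
        groups = defaultdict(list)
        for run in runs:
            if param in run["config"] and metric in run["metrics"]:
                groups[run["config"][param]].append(run["metrics"][metric])
        means = [mean(values) for values in groups.values()]
        spread[param] = max(means) - min(means) if means else 0.0
    return dict(sorted(spread.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical results from a 2x2 grid of runs.
RUNS = [
    {"config": {"lr": 0.1, "depth": 3}, "metrics": {"accuracy": 0.80}},
    {"config": {"lr": 0.1, "depth": 5}, "metrics": {"accuracy": 0.82}},
    {"config": {"lr": 0.01, "depth": 3}, "metrics": {"accuracy": 0.70}},
    {"config": {"lr": 0.01, "depth": 5}, "metrics": {"accuracy": 0.72}},
]

if __name__ == "__main__":
    print(parameter_sensitivity(RUNS, "accuracy"))
```

Grouping one parameter at a time ignores interactions between parameters and can mislead on unbalanced run sets, so it is a starting point for inspection rather than a conclusion.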

Intents2Workflows translates high-level user-defined analytical intents into actionable and executable workflows.

Process

The user defines an analytical task at a high level

Key features are extracted from the description

The intent is mapped to a rich knowledge base

Ontology-based dependency tracing generates workflows

Workflows are initially encoded in RDF

RDF workflows can be translated into other representations, such as the DSL required by the execution engine

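Because workflows are generated from the knowledge base rather than written by hand, and can be exported to several representations, this component automates workflow generation while remaining flexible about the target format.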
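The dependency-tracing step can be pictured as backward chaining over a knowledge base: start from the output the intent asks for, find an operator that produces it, then recursively satisfy that operator's inputs until only data the user already has remains. The sketch below does this over a toy set of triples and encodes the result as RDF-style triples. The vocabulary (`ex:requires`, `ex:produces`, `ex:hasStep`, the operator names) is hypothetical; the real component relies on a much richer ontology and an RDF toolchain, and the translation into the execution engine's DSL is not shown.

```python
from typing import FrozenSet, List, Tuple

Triple = Tuple[str, str, str]

# Toy knowledge base; every name here is a hypothetical placeholder.
KB: List[Triple] = [
    ("ex:LoadCSV", "ex:requires", "ex:RawFile"),
    ("ex:LoadCSV", "ex:produces", "ex:Table"),
    ("ex:Clean", "ex:requires", "ex:Table"),
    ("ex:Clean", "ex:produces", "ex:CleanTable"),
    ("ex:TrainClassifier", "ex:requires", "ex:CleanTable"),
    ("ex:TrainClassifier", "ex:produces", "ex:Model"),
]


def requirements(kb: List[Triple], op: str) -> List[str]:
    return [o for s, p, o in kb if s == op and p == "ex:requires"]


def producers(kb: List[Triple], data: str) -> List[str]:
    return [s for s, p, o in kb if p == "ex:produces" and o == data]


def trace(kb: List[Triple], goal: str, available: FrozenSet[str],
          visiting: FrozenSet[str] = frozenset()) -> List[str]:
    """Backward-chain from `goal` to an ordered list of operators."""
    if goal in available:
        return []
    if goal in visiting:
        raise LookupError(f"cyclic dependency on {goal}")
    for op in producers(kb, goal):
        try:
            plan: List[str] = []
            for needed in requirements(kb, op):
                for step in trace(kb, needed, available, visiting | {goal}):
                    if step not in plan:
                        plan.append(step)
            return plan + [op]
        except LookupError:
            continue  # this producer cannot be satisfied; try the next one
    raise LookupError(f"no operator produces {goal}")


def workflow_triples(steps: List[str], wf: str = "ex:wf1") -> List[Triple]:
    """Encode the ordered steps as RDF-style triples."""
    triples: List[Triple] = []
    for i, op in enumerate(steps):
        node = f"{wf}_step{i}"
        triples.append((wf, "ex:hasStep", node))
        triples.append((node, "ex:runs", op))
        if i > 0:
            triples.append((f"{wf}_step{i - 1}", "ex:next", node))
    return triples


if __name__ == "__main__":
    steps = trace(KB, "ex:Model", frozenset({"ex:RawFile"}))
    for s, p, o in workflow_triples(steps):
        print(f"{s} {p} {o} .")  # Turtle-style lines; @prefix declarations omitted
```

If the user already holds a cleaned table, the same call returns only the training step, which is how the available data shapes the generated workflow.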

The entity resolution component is designed to:

- Resolve entities in real time across heterogeneous and multi-source datasets.

- Leverage attention-based language models to achieve high-precision matching.

- Accelerate deduplication workflows by up to 10x using optimized meta-blocking techniques.

- Optimize resource allocation through the use of clean, unified datasets for critical decision-making.
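Meta-blocking reduces the number of record comparisons an entity resolution pipeline has to make. Records are first grouped into blocks (here, one block per token they contain); pairs sharing at least one block form a weighted blocking graph, and weakly supported edges are pruned before any expensive matching runs. The sketch below implements token blocking, the Common Blocks Scheme (edge weight equals the number of shared blocks), and Weighted Edge Pruning (edges below the mean weight are dropped). It illustrates the general technique only, not the component's actual pipeline, and it omits the attention-based matcher that would score the surviving pairs.

```python
from collections import defaultdict
from itertools import combinations
from typing import Dict, Set, Tuple

Pair = Tuple[str, str]


def token_blocking(records: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each token to the ids of the records that contain it."""
    blocks: Dict[str, Set[str]] = defaultdict(set)
    for rid, text in records.items():
        for token in set(text.lower().split()):
            blocks[token].add(rid)
    # Blocks holding a single record generate no comparisons.
    return {t: ids for t, ids in blocks.items() if len(ids) > 1}


def blocking_graph(blocks: Dict[str, Set[str]]) -> Dict[Pair, int]:
    """Common Blocks Scheme: edge weight = number of blocks two records share."""
    weights: Dict[Pair, int] = defaultdict(int)
    for ids in blocks.values():
        for a, b in combinations(sorted(ids), 2):
            weights[(a, b)] += 1
    return dict(weights)


def weighted_edge_pruning(weights: Dict[Pair, int]) -> Dict[Pair, int]:
    """Keep only edges whose weight is at least the mean edge weight."""
    if not weights:
        return {}
    threshold = sum(weights.values()) / len(weights)
    return {pair: w for pair, w in weights.items() if w >= threshold}


# Hypothetical records from two sources.
RECORDS = {
    "r1": "John Smith London",
    "r2": "Jon Smith London UK",
    "r3": "Mary Jones London",
    "r4": "Mary Jones Paris",
}

if __name__ == "__main__":
    graph = blocking_graph(token_blocking(RECORDS))
    print(weighted_edge_pruning(graph))
```

On this toy input, the 6 possible pairs shrink to 4 candidate edges after blocking and to 2 after pruning (r1/r2 and r3/r4); only those pairs would be passed to the matcher.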

Optional Components

The gamification module consists of:

- a gamification engine that enables the logged-in user to display four types of dashboards:

  - trophies and rewards for all metrics of each experiment, with a manual filter for each experiment,

  - a ranking of all experiments, with a manual filter for each metric,

  - a ranking of all users of the selected use case, with a manual filter for each metric,

  - a reward configurator, accessible only to gamification managers, that enables them to configure the reward rules.

- API connectors that connect the gamification module to the metrics API in order to retrieve the metrics of each experiment and display them according to the parameters configured by the gamification manager.
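The reward rules a gamification manager configures and the per-metric rankings can be modelled with very little code. In the sketch below, a rule awards a trophy when an experiment's metric reaches a threshold, and experiments are ranked by a chosen metric. The rule format, trophy names, and metric names are hypothetical, and the real module retrieves metrics through its API connectors rather than from an in-memory dictionary.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Metrics = Dict[str, Dict[str, float]]  # experiment id -> metric name -> value


@dataclass(frozen=True)
class RewardRule:
    """Hypothetical rule: award `trophy` when `metric` reaches `threshold`."""
    metric: str
    threshold: float
    trophy: str
    higher_is_better: bool = True

    def is_met(self, value: float) -> bool:
        if self.higher_is_better:
            return value >= self.threshold
        return value <= self.threshold


def award_trophies(metrics: Metrics, rules: List[RewardRule]) -> Dict[str, List[str]]:
    """Trophies earned by each experiment; rules on missing metrics are skipped."""
    return {
        exp: [rule.trophy for rule in rules
              if rule.metric in values and rule.is_met(values[rule.metric])]
        for exp, values in metrics.items()
    }


def rank_experiments(metrics: Metrics, metric: str,
                     higher_is_better: bool = True) -> List[Tuple[str, float]]:
    """Experiments that report `metric`, best first."""
    scored = [(exp, values[metric]) for exp, values in metrics.items() if metric in values]
    return sorted(scored, key=lambda item: item[1], reverse=higher_is_better)


METRICS = {
    "exp-a": {"accuracy": 0.91, "runtime_s": 120},
    "exp-b": {"accuracy": 0.87, "runtime_s": 45},
    "exp-c": {"accuracy": 0.93},
}
RULES = [
    RewardRule("accuracy", 0.90, "accuracy-gold"),
    RewardRule("runtime_s", 60, "fast-run", higher_is_better=False),
]

if __name__ == "__main__":
    print(award_trophies(METRICS, RULES))
    print(rank_experiments(METRICS, "accuracy"))
```

The `higher_is_better` flag matters because some metrics, such as runtime, reward lower values.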

Experiment Cards are a knowledge management and documentation component designed to support reproducible AI experimentation. By integrating automatically collected metadata with human-in-the-loop input, Experiment Cards document essential experimentation aspects such as intent, constraints, evaluation metrics, outcomes, and lessons learned. They address the limitations of existing documentation approaches, such as Model Cards and Data Cards, which primarily describe static AI artifacts and fail to represent the dynamic and iterative nature of experimentation. Moreover, current practices often overlook the importance of systematically reusing knowledge generated across experimental cycles, leading to fragmented or lost insights.

Functionally, Experiment Cards provide a unified view of experiments and a querying mechanism over executed experiments. They capture experimentation throughout its lifecycle both automatically, via technical components of the ExtremeXP platform when available, and through user interaction.
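The fields that Experiment Cards document (intent, constraints, evaluation metrics, outcomes, lessons learned) and the querying mechanism over executed experiments can be sketched as a record type plus a filter. The dataclass below is a hypothetical illustration: the field names, the `auto_metadata` split between automatically collected and user-entered information, and the `query` helper are assumptions, not the Experiment Cards schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class ExperimentCard:
    experiment_id: str
    intent: str                                               # entered by the user
    constraints: List[str] = field(default_factory=list)
    metrics: Dict[str, float] = field(default_factory=dict)   # evaluation results
    outcome: str = ""
    lessons_learned: List[str] = field(default_factory=list)
    # Metadata collected automatically by platform components, when available.
    auto_metadata: Dict[str, str] = field(default_factory=dict)


def query(cards: List[ExperimentCard],
          intent_contains: Optional[str] = None,
          min_metric: Optional[Tuple[str, float]] = None) -> List[ExperimentCard]:
    """Filter cards by a phrase in the intent and/or a lower bound on a metric."""
    matches = []
    for card in cards:
        if intent_contains is not None and intent_contains.lower() not in card.intent.lower():
            continue
        if min_metric is not None:
            name, bound = min_metric
            # Cards that never recorded the metric are excluded.
            if name not in card.metrics or card.metrics[name] < bound:
                continue
        matches.append(card)
    return matches


# Hypothetical cards for illustration.
CARDS = [
    ExperimentCard("e1", "Reduce false alarms in anomaly detection",
                   constraints=["inference latency under 200 ms"],
                   metrics={"f1": 0.78}, auto_metadata={"backend": "local"}),
    ExperimentCard("e2", "Improve anomaly detection recall", metrics={"f1": 0.84},
                   lessons_learned=["placeholder lesson text"]),
    ExperimentCard("e3", "Forecast energy demand", metrics={"mape": 0.12}),
]

if __name__ == "__main__":
    for card in query(CARDS, intent_contains="anomaly", min_metric=("f1", 0.8)):
        print(card.experiment_id, card.metrics)
```

Querying by intent as well as by metric is what lets lessons from earlier cycles resurface when a similar goal comes up again.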